Congressional Republicans Push Bills That Would Block Kids’ Access To Content For Ideological Reasons

from the how-is-this-protecting-kids? dept

Should parents have a right to monitor and control which sites and apps their kids use? Today, parents do have that legal right under the Children’s Online Privacy Protection Act (COPPA). The 1998 law requires verifiable parental consent before websites or apps can collect, use or share personal information from children under 13. In practice, that means parents must consent before such children can use any social media app.

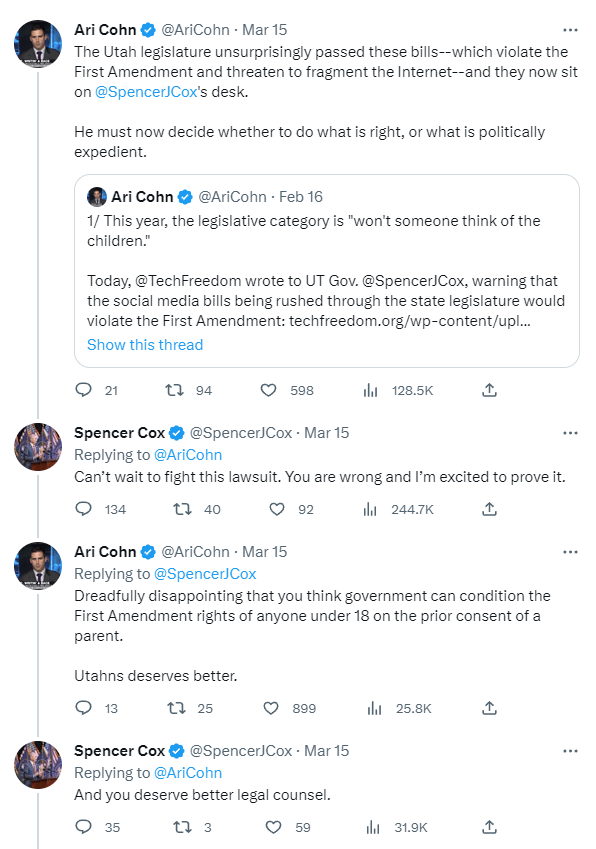

The original version of COPPA would have required parental notification whenever teens (ages 13-16) signed up for websites, and parents would have had the right to access personal information shared with such sites. Those provisions were dropped after free speech advocates warned that they could “chill protected First Amendment activities and undermine rather than enhance teenagers privacy,” especially when “teenagers may be divulging or seeking information they don’t want their parents to know about.” As the Center for Democracy & Technology warned, “parents have an important role in protecting their teenager’s privacy, however the bill’s emphasis on parental access may overlook older minors’ interests.”

Now, legislation is moving in Congress that would give parents the right to monitor and control the apps and platforms their teens use. Yesterday, in party-line votes, the House Energy & Commerce Committee sent two bills to the House floor. The “App Store Accountability Act” (ASAA) would require app stores to categorize users by age, associate the minors’ accounts with a parental account, and then obtain consent from the parental account when the minor user creates an account on the app store, or installs any app. This gives parents the right to monitor and control exactly which apps their kids are using. The Committee also approved the KIDS Act, which would require parental consent before any social media “platform” (website or app) could allow teens to use “any direct messaging feature.”

These bills would “make vulnerable kids less safe,” warned the committee’s Ranking Member, Frank Pallone (D-NJ), because they “threaten kids in unsupportive or even abusive households where there can be real-world harms from allowing parents complete access and control over their teens’ online existence.” This is essentially the same concern raised in 1998.

Rep. Diana Harshbarger (R-TN) was more direct than most of her Republican colleagues, insisting that parents need the bills to protect kids “who have access to these online evils.” Which evils? “Kids should not be looking at pornography—this is just common sense, people,” she said. Perhaps so: last year, the Supreme Court upheld age verification mandates for pornography in Free Speech Coalition v. Paxton (2025). But Harshbarger went much further: “We’ve been hearing from a lot of folks who profit off doing harm to kids or have questionable ideological priorities.”

Her fellow Tennessee Republican, Sen. Marsha Blackburn (R-TN), has been clear about just which “ideologies” need to be stopped. Last year, she was recorded, in remarks to a private meeting of social conservatives, saying the quiet part out loud: Republicans’ top priority should be “protecting minor children from the transgender [sic] in this culture and that influence.” Roughly half of American adults tell pollsters that trans people should be legally required to use public bathrooms that match their sex at birth, rather than the gender they identify with. Many of those adults are parents who doubtless think their teens need to be “protected” from sites and apps dedicated to helping LGBTQ teens who feel isolated and alone, such as TrevorSpace and GiveUsTheFloor.

These sites aren’t exploiting anyone for profit. They’re both non-profits dedicated to education and building communities of the kids most at risk for mental illness and suicide. Yet ASAA and the KIDS Act would require parents to approve teens’ access to both sites. This isn’t an accident: where COPPA applies only to sites that operate “in commerce” (i.e., for profit), neither bill contains any such limit, and thus both would apply even to pure non-profits. This problem could be fixed with a surgical amendment, but Republicans would surely object and Democrats failed to raise this issue at yesterday’s markup.

Even if this problem were fixed, the larger problem would remain: for-profit apps are overwhelmingly the ones that vulnerable teens use to access perspectives on the world their parents want to block and to find other teens they can relate to. Popular apps like Snapchat and TikTok are especially vital in regions where LGBTQ teens face hostility or violence for expressing their sexuality or gender identity. Under ASAA and the KIDS Act, parents could block such apps to “protect” their teens from “online evils” like subversive ideas about gender, sexuality, contraception or religion.

Parents could, under ASAA, also block AI apps. At a Senate hearing last year on “Examining the Harm of AI Chatbots,” one parent complained that a Character.AI chatbot had “turned [her son] against our church by convincing him that Christians are sexist and hypocritical and that God does not exist.” Sen. Josh Hawley (R-MO) told her: “You didn’t know it at the time, but the chatbot was actively indoctrinating your son into questioning your beliefs as a family, your Biblical beliefs.” Questionable “ideological priorities,” indeed.

Both bills pay lip service to the First Amendment. The KIDS Act provides that it shall not be interpreted to “[a]llow a governmental entity to enforce this Act based on a viewpoint expressed by or through any speech, expression, or information protected by the First Amendment to the Constitution of the United States.” Likewise, ASAA “shall not be construed … to affect or restrict the expression of political, religious, or other viewpoints.” These rules of construction might well help ensure that courts scrutinize selective enforcement of these bills aimed at suppressing disfavored speech. But these provisions won’t address the core problem with the bills: that parents will block viewpoints they don’t want their teens to access by controlling which apps and platforms they use.

“Constitutional rights do not mature and come into being magically only when one attains the state-defined age of majority,” as the Supreme Court has noted. “[M]inors are entitled to a significant measure of First Amendment protection,” the Court has said, “and only in relatively narrow and well-defined circumstances may government bar public dissemination of protected materials to them.”

The Court reiterated this point in Brown v. Entertainment Merchants Association (2010), which struck down age verification requirements for video games. Because virtual violence was not obscene to minors, the First Amendment applied, unlike in Paxton, which upheld a Texas law requiring age verification for sites whose content was at least one-third composed of pornography, which is obscene to minors. In Brown, California argued that its law was “justified in aid of parental authority: By requiring that the purchase of violent video games can be made only by adults, the Act ensures that parents can decide what games are appropriate.” The Supreme Court has long recognized “the liberty of parents and guardians to direct the upbringing and education of children under their control.” But the Brown Court doubted “that punishing third parties for conveying protected speech to children just in case their parents disapprove of that speech is a proper governmental means of aiding parental authority.” Those “doubts” should apply even more strongly to ASAA and the KIDS Act.

Rep. Harshbarger claimed that the KIDS Act hews closely to Paxton. Another member referenced the Eleventh Circuit’s recent decision in CCIA & NetChoice v. Uthmeier, which allowed a Florida law that included an age verification mandate for social media to take effect pending a First Amendment challenge. The appeals court claimed that Paxton “recently clarified that age verification does not automatically trigger strict scrutiny because it does not constitute a ‘ban on speech to adults.’”

Both misread Paxton. Here’s what the Court actually said: “the First Amendment leaves undisturbed States’ power to impose age limits on speech that is obscene to minors.” That’s irrelevant here. Like the age verification requirement for violent video games in Brown, the KIDS Act and ASAA both clearly require age verification for content that is not obscene to minors, and both bills clearly do burden the First Amendment right of adults to access entirely lawful speech anonymously. True, ASAA tries to reduce this burden by applying the age verification mandate only to the category of users that are found likely to be minors (presumably excluding much older adults), but such a category will necessarily include many adults, who will have to identify themselves to exercise their First Amendment rights. That is exactly what made age verification unlawful in Brown.

TechFreedom prebutted the Eleventh Circuit’s confusion in Uthmeier. As our amicus brief explained, Paxton essentially said two things. First, for content obscene to minors, age-verification laws are (now—due to Paxton’s contortions) akin to regulations on expressive conduct. When content obscene to minors is at issue, the state’s regulatory power “necessarily includes the power to require proof of age.” In the context of adult content (pornography), in other words, an age-verification “statute can readily be understood as an effort to restrict minors’ access” to speech unprotected as to them. In the context of social media, by contrast, no such assumption applies. Restrictions in that realm remain, as they have always been, presumptively unconstitutional direct regulations on speech—as Brown held. The Eleventh Circuit simply misunderstood this, and buried its misreading of Paxton in a flimsily reasoned footnote.

So, what can lawmakers do, consistent with the First Amendment? Congress might start by creating a more privacy-protective national standard for age verification for pornography. Notably, Texas’s law does nothing to address the data security concerns raised by collecting user information for age verification.

For social media services, lawmakers should focus on what has always been the clearest harm: sexual exploitation by adults. Legislation could start by empowering parents to control who their teens can communicate with. In this sense, the KIDS Act is better than ASAA: it focuses on parents’ access to direct messaging controls rather than approving the installation of each app. Democrats’ alternative bill, the “Safe Messaging for Kids Act,” is still more focused: it would require only that platforms “shall provide a parental tool to allow a parent of a covered user to view the covered user’s direct messaging control settings.” But both bills would require some form of age verification for some adults for lawful content. Paxton doesn’t make that constitutional.

But maybe that’s OK. Do parents really need the government to require platforms and app stores to age-verify users to determine who’s a minor? ASAA requires that a minor’s account “be affiliated with a parental account” but it doesn’t require any effort to prove that the parental account actually belongs to the minor’s parent, because there is no easy or reliable way of doing so. Instead, it’s enough that this account “be established by an individual who the app store provider has determined is an adult.” If we can reasonably assume that person is the parent, why can’t we trust parents to manage the settings on devices they purchase for their teens? After all, mobile carriers allow only adults to set up accounts.

If the existing parental controls in operating systems and app stores are inadequate or too hard to use, that’s where regulation should focus. That would be “less restrictive” of speech, in First Amendment terms, than forcing adults to identify themselves. Perhaps parents do need better controls over direct messaging. Apple iOS currently allows parents to control direct messaging, but only for built-in apps. But any controls required by law should be content- and viewpoint-neutral, which means that they should work across all apps, lest they become an indirect way for parents to veto teens’ use of particular apps, like TrevorSpace or GiveUsTheFloor.

Whatever the government might require, it has no business protecting teens from “questionable ideological priorities,” even through the indirect means of requiring parental controls. “Whatever the power of the state to control public dissemination of ideas inimical to the public morality,” said the Supreme Court long ago, “it cannot constitutionally premise legislation on the desirability of controlling a person’s private thoughts.” That’s true even if that person is a teenager.

Berin Szóka is President of TechFreedom.

Filed Under: 1st amendment, asaa, children, coppa, diana harshbarger, frank pallone, free speech, josh hawley, kids act, marsha blackburn, parental controls, parents